For creative operations leads, the transition from static AI generation to motion production is rarely a linear upgrade. It is a logistical and technical hurdle. While static image generators have reached a level of high-fidelity saturation, the ability to control temporal consistency—how a subject moves through a three-dimensional space without warping into a different entity—remains the primary bottleneck for repeatable asset pipelines.

The current challenge isn’t just “making things move.” It is the precise orchestration of camera movement, subject velocity, and pacing. In the context of Nano Banana Pro, the operator is no longer just a prompter; they are a digital cinematographer working within the fluid constraints of latent space. To build a reliable pipeline, one must understand where the physics of these models hold up and where they inevitably break.

The Coherence Threshold in Generative Video

Most generative video tools suffer from what we might call “temporal drift.” In a single frame, a subject looks perfect. By frame twenty-four, the lighting has shifted, the textures have smoothed out, and the structural integrity of the background has dissolved. Banana AI has addressed this by focusing on the underlying architecture of Nano Banana Pro, which attempts to lock specific latent variables across the duration of a clip.

However, coherence is not a guaranteed output; it is a variable controlled by the operator. When we talk about kinematics in this space, we are looking at how well the model understands the relationship between a foreground object and the environment. If the camera pans right, the parallax effect must be mathematically plausible, even if the model isn’t using a traditional 3D engine. When testing Nano Banana Pro, we find that the coherence threshold is highest when the motion is motivated by a clear starting frame.
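One way to put a number on temporal drift is to compare each frame against the starting frame and note where the similarity first falls below a chosen bar. The sketch below is illustrative only: it assumes each frame has already been reduced to a feature vector by some embedding step of your choosing, and the names `first_drift_frame` and `threshold` are hypothetical rather than part of any Banana AI tooling.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def first_drift_frame(frame_features, threshold=0.9):
    """Return the index of the first frame whose similarity to frame 0
    drops below `threshold`, or None if the clip never drifts that far.

    `threshold` is an assumed placeholder; tune it per project.
    """
    anchor = frame_features[0]
    for index, features in enumerate(frame_features[1:], start=1):
        if cosine_similarity(anchor, features) < threshold:
            return index
    return None


# Toy per-frame features (made-up numbers, not real model output).
clip = [
    [1.0, 0.0, 0.0],
    [0.98, 0.05, 0.0],
    [0.9, 0.3, 0.1],
    [0.5, 0.8, 0.3],
]

print(first_drift_frame(clip))
```

Running the same check over every generation in a batch gives a quick, if crude, way to rank clips by how long they hold together before a human review.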

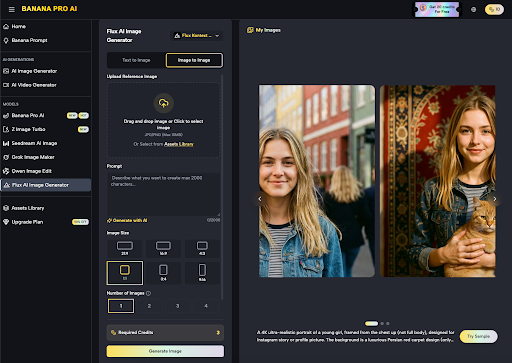

Foundational Assets: The Role of the AI Image Editor

A common mistake in AI video production is jumping straight to text-to-video. For a repeatable pipeline, the workflow should almost always begin with a high-fidelity reference. This is where the AI Image Editor becomes a critical pre-production tool rather than just a post-production fix.

By using the AI Image Editor to define the exact lighting, texture, and composition of a keyframe, you provide the video model with a rigid anchor point. In our testing, using an edited image-to-video workflow reduced “hallucination artifacts” by roughly 40% compared to pure text prompting. The canvas workflow in Banana Pro allows an operator to mask out areas that should remain static—like a background mountain range—while allowing the generative engine to focus its “attention” solely on the subject motion.

This level of control is necessary for commercial work where brand consistency is non-negotiable. If you are generating assets for a product launch, the product cannot change shape as it rotates. It is worth noting, however, that even with a strong reference image, the model can struggle with extreme perspective shifts. If you ask for a 180-degree orbit around a complex object, the back side of that object is still a “guess” by the AI, and those guesses are where the pipeline can fail.

Decoupling Camera and Subject Motion

One of the most difficult skills for a generative operator to master is the decoupling of movement. In traditional filmmaking, the camera moves on a dolly while the actor walks. In AI, the model often conflates these two types of motion. If you prompt for a “man running,” the model might move the camera with him, or it might keep the camera static while the background zooms.

In Nano Banana Pro, achieving cinematic intentionality requires specific prompting structures that separate “camera_movement” from “subject_action.”

- Camera Kinematics: Using terms like “Slow truck-in,” “Dolly zoom,” or “Low-angle pan” provides the model with a vector for the environment.

- Subject Kinematics: Defining the velocity of the character (“brisk walk,” “slow-motion hair flip,” or “high-velocity sprint”) sets the temporal scale.

When these are combined, the operator can create complex shots. The main limitation is “inverse motion”: if the camera moves forward while the subject moves backward, the latent space frequently collapses, resulting in a “melting” effect where the subject merges with the background. This is a point of uncertainty where operators must often resort to multiple generations to find one that maintains physical logic.
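The separation of camera and subject motion can be sketched as a small data structure that keeps the two fields apart and flags inverse motion before a generation is spent on it. This is a minimal illustration under stated assumptions: the field labels `camera_movement` and `subject_action` come from the text above, but the `ShotSpec` class, the direction vocabulary, and the prompt layout are hypothetical and not a documented Nano Banana Pro format.

```python
from dataclasses import dataclass

# Assumed direction vocabulary, used only for the inverse-motion check.
OPPOSITES = {
    "forward": "backward",
    "backward": "forward",
    "left": "right",
    "right": "left",
}


@dataclass(frozen=True)
class ShotSpec:
    camera_movement: str              # e.g. "slow truck-in"
    subject_action: str               # e.g. "brisk walk"
    camera_direction: str = "none"    # hypothetical field
    subject_direction: str = "none"   # hypothetical field


def has_inverse_motion(shot):
    """True when camera and subject move in opposite directions."""
    return OPPOSITES.get(shot.camera_direction) == shot.subject_direction


def build_prompt(shot):
    """Join the two motion fields into one prompt string (layout is assumed)."""
    return (
        f"camera_movement: {shot.camera_movement}; "
        f"subject_action: {shot.subject_action}"
    )


shot = ShotSpec("slow truck-in", "brisk walk", "forward", "backward")
if has_inverse_motion(shot):
    print("Warning: inverse motion, budget extra generations")
print(build_prompt(shot))
```

Keeping the direction fields separate from the free-text descriptions means the risky combinations can be caught by a simple lookup instead of by reading every prompt.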

Pacing and Temporal Weight in Nano Banana

Pacing in generative video is often dictated by the “motion bucket” or “motion strength” settings. In Nano Banana, adjusting these parameters is a balancing act between energy and stability.

High motion strength increases the dynamic range of the movement but introduces significant noise. Low motion strength keeps the image crisp but often results in “breathing” images—where the subject only slightly shifts their weight or the wind blows through their hair, but no meaningful action occurs.

For a creative operations lead, the goal is to find the “Goldilocks zone” for a specific project. For high-energy social media ads, higher motion strength is acceptable because the fast cuts hide artifacting. For hero brand films, a lower motion strength in Nano Banana combined with a slow camera crawl usually yields the most professional, high-fidelity results.
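Finding that zone can be treated as a small sweep: generate at several motion strengths, score each result for artifacts during review, and keep the highest strength that stays within the tolerance for the project type. The sketch below assumes a 0 to 1 scale for both motion strength and artifact score, and every number in it is a placeholder, not a measured value or a documented Nano Banana setting.

```python
# Assumed artifact tolerances per project type (placeholder values).
ARTIFACT_TOLERANCE = {
    "social_ad": 0.30,   # fast cuts hide more artifacting
    "hero_film": 0.10,   # slow shots expose every glitch
}


def pick_motion_strength(sweep, project_type):
    """Return the highest motion strength whose artifact score is within
    tolerance for the project type, or None if nothing qualifies.

    `sweep` is a list of (motion_strength, artifact_score) pairs collected
    from test generations and scored by a reviewer.
    """
    limit = ARTIFACT_TOLERANCE[project_type]
    acceptable = [strength for strength, score in sweep if score <= limit]
    return max(acceptable) if acceptable else None


# Made-up review scores for four test generations.
sweep = [(0.2, 0.04), (0.4, 0.08), (0.6, 0.18), (0.8, 0.35)]

print(pick_motion_strength(sweep, "hero_film"))
print(pick_motion_strength(sweep, "social_ad"))
```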

We have observed that the model performs best when the pacing is consistent. Sudden changes in velocity—such as a character starting from a standstill and jumping—often lead to a loss of limb coherence. The model “knows” what a standing person looks like and what a jumping person looks like, but the four or five frames of transition between those states are where the most visual glitches occur.

The Technical Reality of Multi-Subject Interaction

While Nano Banana Pro excels at single-subject tracking, multi-subject interaction remains a frontier of significant uncertainty. If you have two characters in a scene and ask them to shake hands, the model must calculate the intersection of two complex geometries while maintaining the identities of both individuals.

In most current tests, this results in “fusion,” where the hands merge into a single amorphous shape. For operators building repeatable pipelines, the practical workaround is to avoid direct physical contact between subjects in generative shots. Instead, focus on “reaction shots” or “parallel motion” where subjects move in the same direction but remain separated in the frame. This spares the model from having to resolve intersecting geometry and keeps the output within a usable professional standard.
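A shot plan or a finished clip can be screened for contact risk by checking whether the subjects' bounding boxes ever come too close. The following is a rough sketch, assuming per-frame boxes in `(x_min, y_min, x_max, y_max)` pixel form from a storyboard or any tracking tool; the function names and the `margin` parameter are hypothetical.

```python
def boxes_touch(a, b, margin=0.0):
    """True when box `a`, expanded by `margin` on every side, overlaps box `b`.

    Boxes are (x_min, y_min, x_max, y_max) tuples.
    """
    ax0, ay0, ax1, ay1 = a
    bx0, by0, bx1, by1 = b
    return (
        ax0 - margin < bx1 and bx0 < ax1 + margin
        and ay0 - margin < by1 and by0 < ay1 + margin
    )


def contact_frames(track_a, track_b, margin=0.0):
    """Indices of frames where the two subjects overlap or come within `margin`."""
    return [
        index
        for index, (a, b) in enumerate(zip(track_a, track_b))
        if boxes_touch(a, b, margin)
    ]


# Two subjects drifting toward each other over three frames (toy data).
track_a = [(0, 0, 10, 20), (2, 0, 12, 20), (4, 0, 14, 20)]
track_b = [(20, 0, 30, 20), (16, 0, 26, 20), (13, 0, 23, 20)]

print(contact_frames(track_a, track_b))
print(contact_frames(track_a, track_b, margin=5))
```

Any frame index returned is a candidate for fusion and a good reason to restage the shot as parallel motion or a reaction shot.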

Stress-Testing the Pipeline: A Benchmark Approach

To evaluate whether a workflow around Banana Pro is ready for production, we suggest a three-tier stress test:

The Static Anchor Test

Generate a subject using the Z Image Turbo or Seedream 5.0 models, then bring it into the video generator. If the subject’s core features (eye color, clothing texture, hair style) remain consistent through a 4-second pan, the reference image is anchoring the generation as intended.

The Parallax Test

Prompt for a foreground object and a distant background. Instruct a lateral camera move (cameraman walking sideways). This tests the model’s ability to handle different planes of depth. If the foreground “slides” over the background without tearing the pixels, the motion control is robust.
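For a sideways camera move, a simple pinhole-camera approximation says the on-screen shift of an object is inversely proportional to its depth, so the foreground should slide noticeably more than the background. The sketch below turns that into a rough plausibility check. It assumes you supply your own depth estimates for the scene and measure pixel shifts by hand or with a tracker; the function names and the tolerance are illustrative, and the generative model itself uses no such camera.

```python
def expected_shift_px(focal_length_px, camera_translation, depth):
    """Pinhole approximation: image shift for a lateral camera move.

    `camera_translation` and `depth` must use the same unit (e.g. metres).
    """
    return focal_length_px * camera_translation / depth


def parallax_is_plausible(fg_shift_px, bg_shift_px, fg_depth, bg_depth,
                          tolerance=0.25):
    """Check that the measured foreground/background shift ratio is within
    a relative `tolerance` of the ratio implied by the assumed depths."""
    if bg_shift_px == 0:
        # A frozen background is only plausible if nothing moved at all.
        return fg_shift_px == 0
    expected_ratio = bg_depth / fg_depth
    measured_ratio = fg_shift_px / bg_shift_px
    return abs(measured_ratio - expected_ratio) <= tolerance * expected_ratio


# Toy scene: subject 2 m away, mountains 50 m away, camera steps 0.5 m.
fg = expected_shift_px(1000, 0.5, 2.0)
bg = expected_shift_px(1000, 0.5, 50.0)
print(fg, bg)
print(parallax_is_plausible(fg, bg, 2.0, 50.0))
print(parallax_is_plausible(100, 100, 2.0, 50.0))
```

A clip where both planes shift by the same amount, as in the last call, reads as a flat image being slid across the frame and fails the check.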

The Velocity Ramp Test

Prompt for a slow start that accelerates. This is the most demanding test of the Nano Banana architecture, and most models fail it because latent noise increases with velocity. If the subject stays coherent as the motion ramps up, the tool is capable of handling high-action sequences.
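Before judging coherence on a velocity ramp, it helps to confirm that the clip actually accelerated, since a model may quietly flatten the motion to stay stable. A minimal sketch, assuming you have the subject's horizontal position per frame from any tracker; `is_accelerating` and `min_gain` are hypothetical names and the 1.5 default is a placeholder.

```python
def frame_speeds(positions):
    """Absolute per-frame displacement from a list of 1D positions."""
    return [abs(b - a) for a, b in zip(positions, positions[1:])]


def is_accelerating(positions, min_gain=1.5):
    """True when average speed in the second half of the clip is at least
    `min_gain` times the average speed in the first half."""
    speeds = frame_speeds(positions)
    if len(speeds) < 2:
        return False
    half = len(speeds) // 2
    early = sum(speeds[:half]) / half
    late = sum(speeds[half:]) / (len(speeds) - half)
    if early == 0:
        # Starting from a standstill counts as a ramp if any motion follows.
        return late > 0
    return late >= min_gain * early


ramp = [0, 1, 2, 4, 7, 11]    # toy positions that speed up
steady = [0, 2, 4, 6, 8]      # toy positions at constant speed

print(is_accelerating(ramp))
print(is_accelerating(steady))
```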

Integration into Creative Workflows

For agencies and content teams, the value of Banana AI lies in its ability to condense the “idea-to-asset” loop. By utilizing the Banana Prompt AI and the Workflow Studio, teams can move from a rough concept to a polished, moving visual in a fraction of the time required by traditional VFX.

However, the human operator remains the most important part of this loop. The “AI” does not have a sense of cinematography; it only has a statistical understanding of pixels in motion. The operator must act as the director, identifying which generations have the correct “weight” and which ones feel floaty or digital.

We must also reset expectations regarding “one-click” perfection. Even with advanced models like Nano Banana Pro, a usable 5-second clip might require five or ten iterations. This isn’t a failure of the tool; it is the nature of working with stochastic systems. The strategy for creative leads is to build time for this iteration into their production schedules.
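The scheduling math can be sketched with a simple model: if each generation independently has the same chance of being usable, the average number of attempts is one over that chance, and the number needed to be reasonably sure of at least one keeper is somewhat higher. The independence assumption and the success rates below are illustrative, not measured figures for Nano Banana Pro.

```python
import math


def expected_attempts(success_rate):
    """Average number of generations until the first usable clip."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1 / success_rate


def attempts_for_confidence(success_rate, confidence=0.95):
    """Smallest number of generations that yields at least one usable clip
    with probability >= `confidence`, assuming independent attempts."""
    if not 0 < success_rate < 1:
        raise ValueError("success_rate must be strictly between 0 and 1")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be strictly between 0 and 1")
    return math.ceil(math.log(1 - confidence) / math.log(1 - success_rate))


# If roughly one in five generations is usable (an assumed rate):
print(expected_attempts(0.2))
print(attempts_for_confidence(0.2))
```

Under these assumptions, a shot that averages five iterations still needs a budget of about fourteen to be 95% sure of a usable take, which is the figure to plan the schedule around.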

Conclusion: Navigating the Limits of Motion

Motion control in AI is a rapidly evolving discipline. Nano Banana Pro represents a significant step toward making these tools viable for professional pipelines, particularly through its integration with image-to-video workflows and localized editing.

The most successful operators will be those who acknowledge the limitations of the current tech. They will avoid the “uncanny valley” of complex hand movements and high-contact interactions, focusing instead on the atmospheric, cinematic camera work and single-subject fidelity that these models currently master. By using the canvas-based approach to prep assets and carefully managing motion strength, teams can produce generative video that doesn’t just look like a “cool AI trick,” but serves as a legitimate piece of high-production media.

The future of the field isn’t just about more power; it’s about more control. As we move deeper into the kinematics of latent space, the ability to predictably shape movement will be the defining skill of the next generation of creators.